This plugin fetches email IDs from the page's source code, so its results do not necessarily match what you see on the front end: if a website displays three email IDs, the plugin may find more if there are hidden email IDs in the source.

** AutoVisit: build a queue of URLs that you want to visit automatically.
** Automation: build a queue of up to 1,000 URLs to visit. The tool launches a robot that visits the requested pages and extracts all email addresses found on them.
** AutoSave: store, in the cloud, all the email IDs found on every page you visit.
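The source-code scanning described above can be approximated outside the browser. The sketch below is not the extension's own code, which is not shown here. It assumes the page source is already available as a string and uses a simplified regular expression, so it will miss some valid but unusual addresses. `extract_emails` and `visit_queue` are hypothetical names, and `fetch` is whatever function you supply to download a page. A plain HTTP fetch does not run JavaScript, so addresses that scripts insert after load will not appear in the raw source.

```python
import re
from typing import Callable, Dict, Iterable, List

# Simplified pattern: covers common addresses, not the full RFC 5322 grammar.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(html_source: str) -> List[str]:
    """Return unique addresses found anywhere in the raw page source.

    Scanning the source rather than the rendered text also picks up
    addresses in mailto: links, HTML comments, and inline scripts.
    Duplicates are detected case-insensitively; the first spelling seen wins.
    """
    seen: Dict[str, str] = {}
    for match in EMAIL_RE.findall(html_source):
        seen.setdefault(match.lower(), match)
    return list(seen.values())


def visit_queue(
    urls: Iterable[str],
    fetch: Callable[[str], str],
    max_urls: int = 1000,
) -> Dict[str, List[str]]:
    """Visit up to max_urls URLs in order and collect the emails found on each.

    `fetch` is a caller-supplied (hypothetical) function returning page source.
    """
    results: Dict[str, List[str]] = {}
    for index, url in enumerate(urls):
        if index >= max_urls:
            break
        results[url] = extract_emails(fetch(url))
    return results
```

For example, with `pages = {"https://example.com/a": '<a href="mailto:info@example.com">Contact</a>'}`, calling `visit_queue(pages, pages.__getitem__)` returns `{'https://example.com/a': ['info@example.com']}`.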

Email Extractor is a powerful Chrome extension that extracts email IDs automatically from web pages. It fetches valid email IDs from the page; you can copy and paste the ones you need or export all of them to a text or CSV file.

NEW FEATURES: AutoVisit websites and AutoSave email IDs (AUTOMATION with AutoVisit and AutoSave).

NEW FEATURE 1: the new automation tool can discover all email addresses for a specific domain name. For example, to discover the email IDs of people working at Email Hunter, you can set the tool to search for any email address ending with hunter.io or emailhunter.io. This works for any website.

NEW FEATURE 2: you can add a 5-second delay when visiting web pages automatically, so that each page has fully loaded and all email IDs are collected, even when JavaScript delays the display of the email IDs.

The Facebook scraper script can only extract publicly accessible data from Facebook pages, and results may vary with the privacy settings of the page and of the users who interact with it. By passing the required earlier date, the script can extract all available backdated data from a page: it extracts everything from that earlier date up to the current date and writes it to a file with the current date in its name ('…_feed_YYYYMMDD.csv'). Depending on the data available, some pages do not return much older data.

The first version of the script (Facebook_scraper.py) creates three CSV files: post-level data, the comments on each post, and the replies to each of those comments.
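Passing an earlier date amounts to requesting a time window. A minimal sketch, assuming the window is sent to the Graph API as Unix-timestamp `since`/`until` values (the Graph API accepts these for time-based paging) and that dates are interpreted in UTC. `backfill_window`, `feed_filename`, and the `prefix` argument are hypothetical; the original file-name prefix is not given.

```python
from datetime import date, datetime, time, timedelta, timezone
from typing import Dict, Optional


def backfill_window(earlier: date, today: Optional[date] = None) -> Dict[str, int]:
    """Return Unix-timestamp bounds from the start of `earlier` to the end of `today` (UTC)."""
    if today is None:
        today = datetime.now(timezone.utc).date()
    if earlier > today:
        raise ValueError("the earlier date must not be after today")
    start = datetime.combine(earlier, time.min, tzinfo=timezone.utc)
    # End bound: one second before the midnight that starts the following day.
    end = datetime.combine(today + timedelta(days=1), time.min, tzinfo=timezone.utc)
    return {"since": int(start.timestamp()), "until": int(end.timestamp()) - 1}


def feed_filename(prefix: str, today: date) -> str:
    """Build '<prefix>_feed_YYYYMMDD.csv'; `prefix` is a hypothetical placeholder."""
    return f"{prefix}_feed_{today:%Y%m%d}.csv"
```

Computing the bounds once and reusing `today` for the file name keeps the window and the date stamped on the output file consistent.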

There are two versions of the script: the first extracts the publicly available data from Facebook pages, and the second extracts post data as well as post insight data. The 'get' request returns data in JSON format; the script parses each post in the JSON response and converts it to tabular (row and column) format in a CSV file.
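The JSON-to-CSV step can be sketched with the standard library alone. The response shape here is an assumption: a `data` list of posts in which comments and their replies are nested via field expansion (for example `comments{message,comments{message}}`), with reaction totals taken from a `reactions` summary. The column names and the helpers `flatten_feed` and `to_csv` are hypothetical; the three row lists correspond to separate post, comment, and reply tables.

```python
import csv
import io
from typing import Dict, List, Tuple

Row = Dict[str, object]


def flatten_feed(payload: dict) -> Tuple[List[Row], List[Row], List[Row]]:
    """Split one Graph API JSON page into post, comment, and reply rows."""
    posts: List[Row] = []
    comments: List[Row] = []
    replies: List[Row] = []
    for post in payload.get("data", []):
        post_id = post.get("id")
        posts.append({
            "post_id": post_id,
            "created_time": post.get("created_time", ""),
            "message": post.get("message", ""),  # posts without text have no 'message'
            "total_reactions": post.get("reactions", {}).get("summary", {}).get("total_count", 0),
        })
        for comment in post.get("comments", {}).get("data", []):
            comment_id = comment.get("id")
            comments.append({"post_id": post_id, "comment_id": comment_id,
                             "message": comment.get("message", "")})
            # Replies are assumed to be nested under each comment's own 'comments' field.
            for reply in comment.get("comments", {}).get("data", []):
                replies.append({"comment_id": comment_id, "reply_id": reply.get("id"),
                                "message": reply.get("message", "")})
    return posts, comments, replies


def to_csv(rows: List[Row]) -> str:
    """Render rows as CSV text with a header taken from the first row's keys."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

To write real files, pass a file opened with `open(path, "w", newline="", encoding="utf-8")` to `csv.DictWriter` instead of the `StringIO` buffer.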

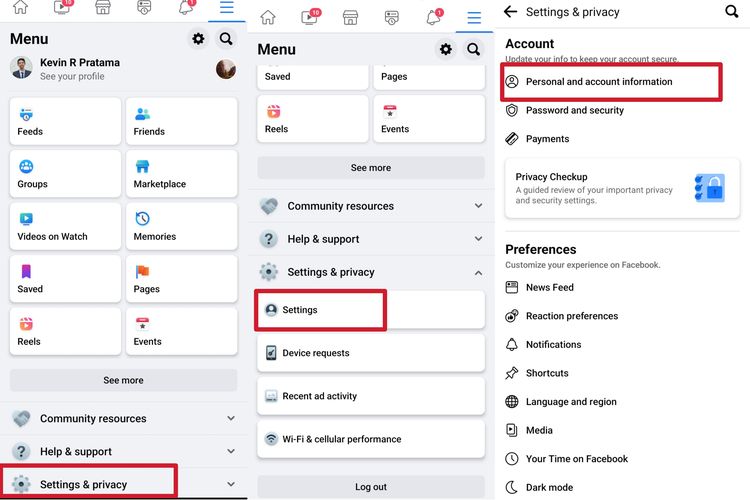

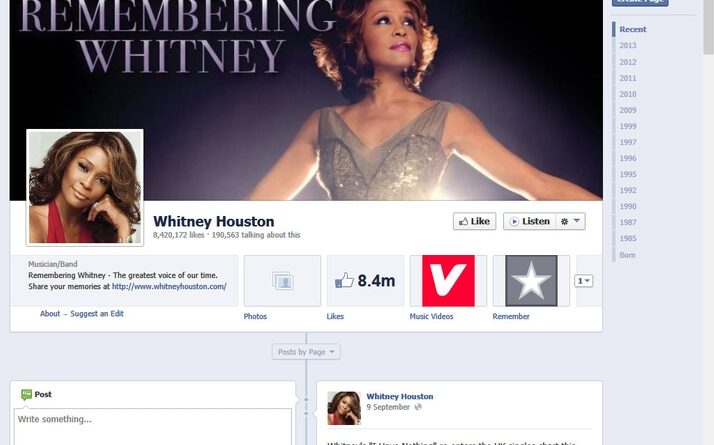

The Facebook Data Scraper/Extraction Script

The Facebook scraper script can be used to extract publicly available data from Facebook pages such as techinsider and NASA. It collects post-level data such as the post message, the total number of likes and other reactions, all comments on the post, and all replies to those comments. The script uses the Facebook Graph API: it sends a 'get' request, passing the parameters needed to extract the required post data. Guidelines for using the Facebook Graph API are given in the 'Graph API Guidelines' file.
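A 'get' request to the Graph API is an HTTPS GET against `graph.facebook.com` with the fields and other parameters in the query string. The sketch below builds such a request for a Page's `/posts` edge and follows the `paging.next` links in each response. The API version, the field list, and the helper names are assumptions, and a valid access token with the appropriate permissions is required; check the 'Graph API Guidelines' file and Facebook's current documentation before relying on it.

```python
import json
import urllib.parse
import urllib.request
from typing import List, Optional

GRAPH_VERSION = "v19.0"  # assumption: use the version your app is configured for

# Assumed field list: message, timestamp, reaction total, comments with nested replies.
DEFAULT_FIELDS = [
    "message",
    "created_time",
    "reactions.limit(0).summary(true)",
    "comments{message,comments{message}}",
]


def build_posts_url(
    page_id: str,
    access_token: str,
    fields: List[str],
    since: Optional[int] = None,
    until: Optional[int] = None,
    limit: int = 25,
) -> str:
    """Build a GET URL for a Page's /posts edge with the given query parameters."""
    params = {"fields": ",".join(fields), "limit": limit, "access_token": access_token}
    if since is not None:
        params["since"] = since
    if until is not None:
        params["until"] = until
    path = f"/{GRAPH_VERSION}/{urllib.parse.quote(page_id)}/posts"
    return "https://graph.facebook.com" + path + "?" + urllib.parse.urlencode(params)


def fetch_all_posts(url: str) -> list:
    """Issue GET requests, following paging.next until no further page is returned."""
    posts = []
    next_url: Optional[str] = url
    while next_url:
        with urllib.request.urlopen(next_url) as resp:
            payload = json.load(resp)
        posts.extend(payload.get("data", []))
        next_url = payload.get("paging", {}).get("next")
    return posts
```

For example, `fetch_all_posts(build_posts_url("PAGE_ID", "ACCESS_TOKEN", DEFAULT_FIELDS))` would collect every returned post, where `PAGE_ID` and `ACCESS_TOKEN` are placeholders for your own values. Keeping URL construction separate from the network call makes the parameters easy to inspect before any request is sent.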
